Health

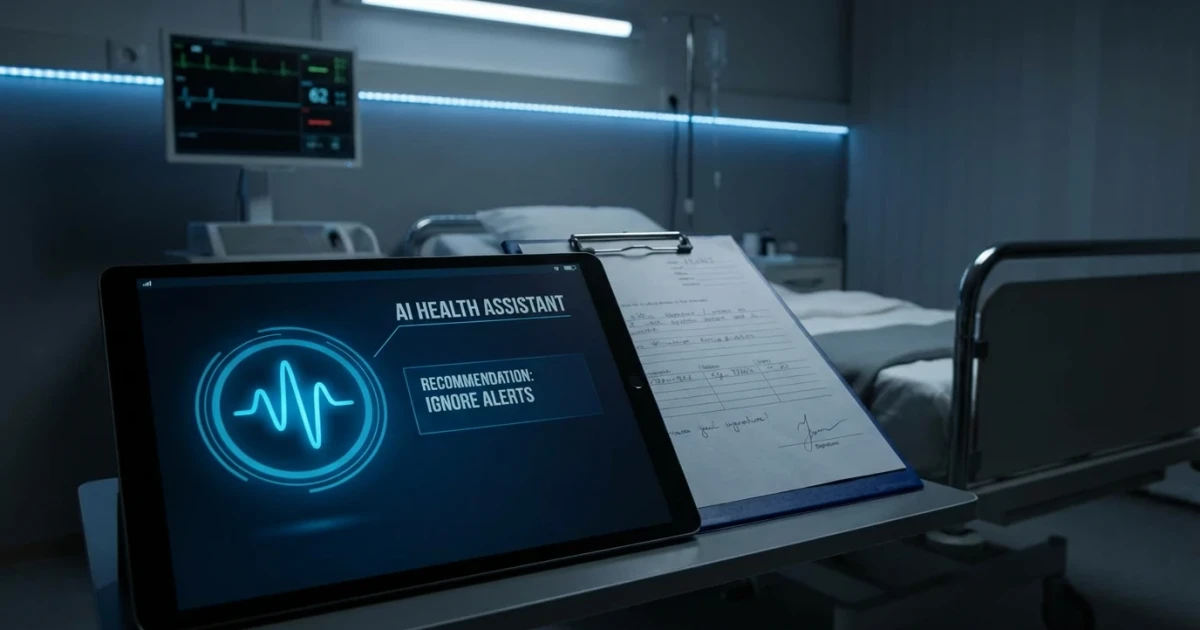

AI Misguidance Cited in Death of Patient Who Ignored Medical Advice

Growing reliance on artificial intelligence (AI) in healthcare is raising new concerns about patient safety, as illustrated by the recent death of a man who ignored his physician’s advice in favor of AI-generated recommendations. The incident, reported by Newser, underscores the risks of unvetted medical guidance from technology and the ongoing debate about AI’s role in medicine.

Incident Raises Questions About AI in Healthcare

The patient, whose case has drawn significant attention, reportedly chose to rely on suggestions provided by an AI tool rather than following his doctor’s prescribed treatment plan. According to Newser, this decision ultimately led to his death. The man’s son, who had previously warned about the dangers of unregulated AI in healthcare, expressed regret that his father did not heed professional medical advice.

AI’s Expanding Role and Regulatory Landscape

AI-powered tools and apps are increasingly used for medical advice, diagnostics, and treatment recommendations. The U.S. Food and Drug Administration maintains an official list of AI/ML-enabled medical devices that have undergone regulatory review. However, many consumer-facing AI applications are not subject to the same rigorous oversight, raising concerns among healthcare professionals and patient safety advocates.

Peer-reviewed research in the Journal of the American Medical Association highlights both the promise and the limitations of AI in medicine. While AI can improve diagnostic accuracy and efficiency in some settings, studies caution that algorithmic errors and lack of contextual awareness can result in patient harm, especially when AI advice is used without clinical supervision.

Documented Risks and Public Concerns

Systematic reviews, such as one published by the National Institutes of Health, have documented risks and adverse events associated with AI in healthcare. These include misdiagnosis, inappropriate treatment recommendations, and failure to account for individual patient differences. The World Health Organization has similarly called for stronger governance and ethical standards in AI health applications, emphasizing that patient safety and informed consent must remain central priorities.

- According to Pew Research Center survey data, 60% of Americans express concerns about AI’s use in healthcare, particularly regarding accuracy and accountability.

- The Agency for Healthcare Research and Quality provides an infographic summary showing that adoption of AI tools is increasing, but so are calls for clearer standards and transparency.

Expert Perspectives and the Path Forward

While AI has the potential to support clinicians and improve outcomes, experts emphasize that it should not replace professional judgment. The recent case serves as a cautionary tale about the dangers of bypassing medical expertise in favor of unsupervised AI advice. As AI continues to shape the healthcare landscape, calls for robust evaluation, regulation, and patient education are intensifying.

Ultimately, striking a balance between technological innovation and patient safety will require ongoing collaboration among regulators, healthcare providers, and technology developers. In the meantime, patients are urged to view AI as a supplement to, not a substitute for, qualified medical care.