Anthropic CEO Meets White House as AI Security Concerns Mount

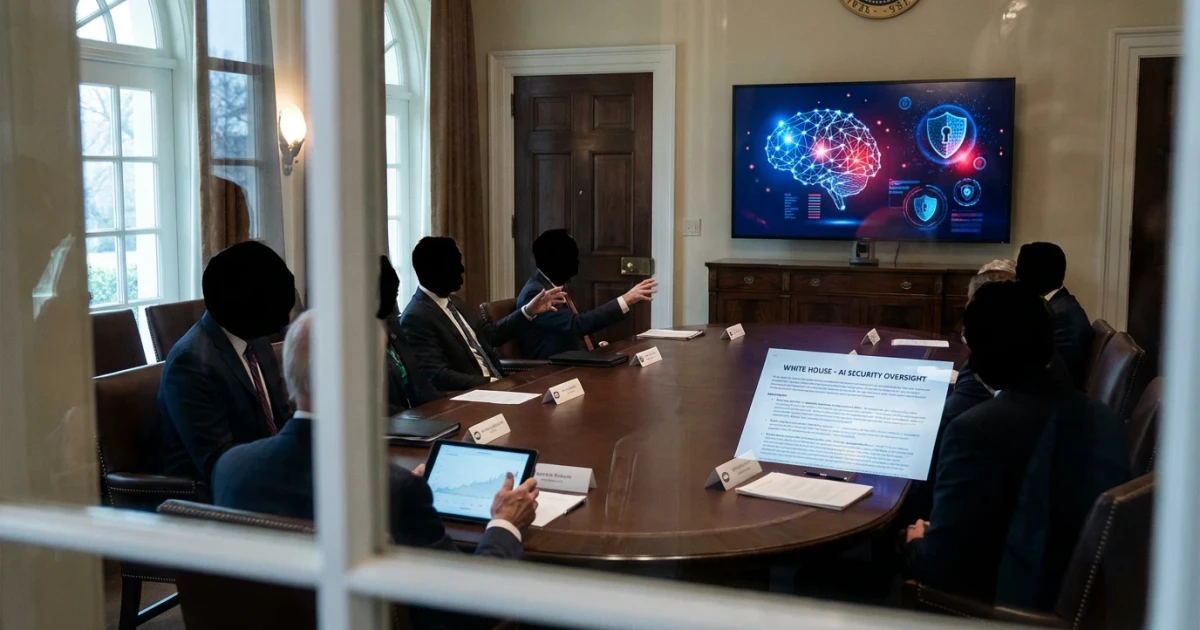

Anthropic CEO Dario Amodei traveled to the White House this week amid intensifying scrutiny of the company’s newest artificial intelligence model and widespread concerns about potential hacking and misuse. The visit highlights the growing pressure on AI developers to address security risks and collaborate with government officials as advanced systems enter the mainstream.

White House Engagement Signals Rising Concerns

Amodei’s trip comes as the White House intensifies its oversight of artificial intelligence, following a series of high-profile incidents and warnings from cybersecurity experts. According to The Washington Post, the administration has made AI safety a priority, with initiatives aimed at ensuring that new models undergo rigorous security testing before deployment.

Hacking Fears Surround Anthropic’s Model

The latest AI model from Anthropic, which has drawn attention for its advanced capabilities, has also raised alarms over potential vulnerabilities. Security researchers and policymakers worry that the model could be exploited for malicious purposes, from generating convincing phishing campaigns to enabling cyberattacks. Anthropic’s presence on a U.S. government blacklist, as noted by CNN, has only heightened the urgency of these discussions.

- The company’s model is viewed as a leader in generative AI, but also as a potential vector for new AI-driven incidents.

- Recent years have seen a sharp rise in the number of reported AI security breaches, according to data from the AI Safety Incident Database.

Regulatory Landscape and Industry Response

To address these risks, the U.S. government has issued new AI risk management guidelines and called for increased transparency from developers. The National Institute of Standards and Technology (NIST) and the Cybersecurity and Infrastructure Security Agency (CISA) have both released frameworks and roadmaps designed to help companies secure their AI systems and respond to emerging threats.

Anthropic, for its part, maintains that it is committed to robust security practices, as detailed in its security overview. The company says it regularly assesses its models for vulnerabilities and works with the broader AI ecosystem to share findings and improve safety standards.

Ongoing Debate Over AI Blacklists

Anthropic’s continued presence on a U.S. government blacklist complicates the company’s dealings with federal officials. Blacklisting is typically associated with national security or compliance concerns, but it also reflects the government’s desire to closely monitor firms at the forefront of AI development. Multiple reports have noted that such measures can both motivate stronger internal controls and stoke industry debate over how best to balance innovation with safety.

What Comes Next?

As AI models continue to evolve in sophistication and reach, the dialogue between tech leaders and policymakers is expected to intensify. The outcome of Anthropic’s White House meetings may set important precedents for how the U.S. approaches not only AI security but also the regulation of rapidly advancing technologies.

For the public and industry alike, the case underscores an urgent reality: as artificial intelligence becomes more powerful, the need grows for effective oversight, collaboration, and technical safeguards to ensure that innovation does not outpace safety.