Journals Struggle as AI Floods Academic Publishing

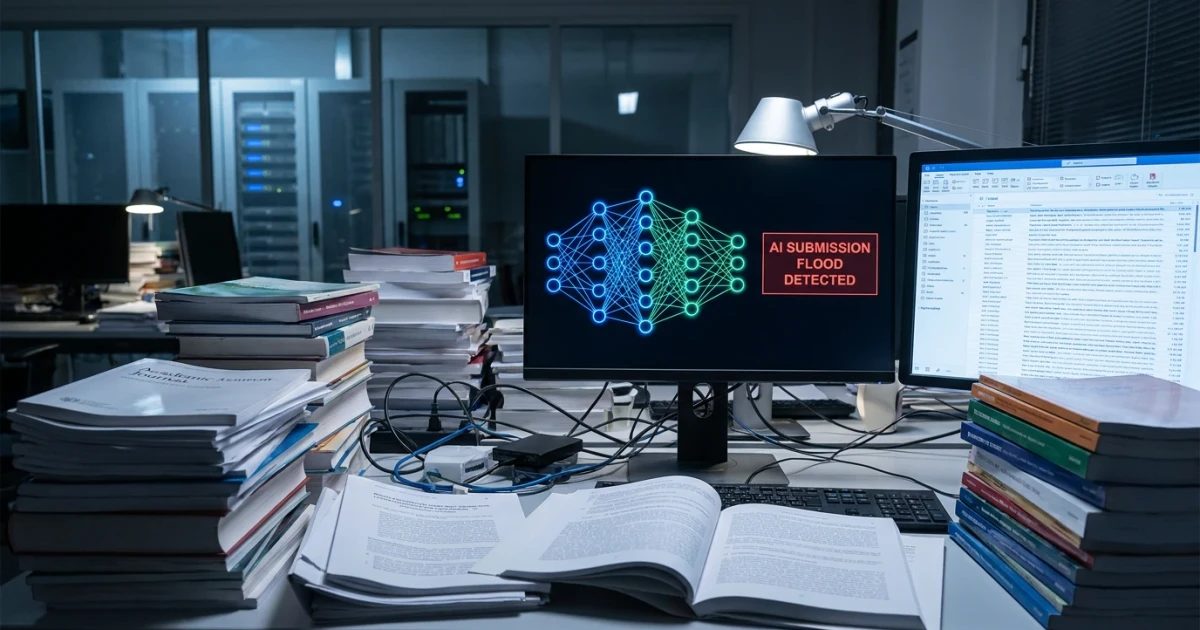

Academic publishing is facing unprecedented challenges as leading journals report a growing influx of AI-generated manuscripts. The rapid integration of artificial intelligence into research workflows has resulted in a surge of submissions, but experts warn that much of this content falls short in quality and transparency.

AI Submissions Overwhelm Journal Review Processes

According to Phys.org, a prominent academic journal has identified a marked increase in papers generated or heavily assisted by AI tools. Editors note that this trend is not limited to a single discipline: the impact is being felt across scientific, medical, and social science publications. The sheer volume of AI-generated manuscripts is straining traditional peer review systems and raising questions about the integrity of scholarly communication.

- Many journals have observed a spike in submissions with stylistic patterns and content structures indicative of AI assistance.

- Editors report that many of these papers lack scientific rigor or originality, leading to higher rejection rates.

- Some publishers are deploying advanced detection tools to identify AI-generated content, but these efforts remain imperfect.

Quality Concerns and Disclosure Gaps

One of the most pressing issues is the decline in manuscript quality. Phys.org highlights that reviewers are encountering more papers with superficial analysis, repetitive phrasing, and minimal contribution to the field. This phenomenon, sometimes referred to as "AI slop," threatens to dilute the value of published research.

Compounding the problem, current journal policies have failed to curb the undisclosed use of AI in manuscript preparation. Many authors do not disclose the extent to which AI tools contributed to their work, making it difficult for editors and reviewers to assess the authenticity and reliability of findings. The lack of transparency undermines trust in the peer review process and the published literature.

Policy Responses and Industry Challenges

Despite efforts to set guidelines for AI use, journals and publishers are struggling to enforce meaningful standards. Some have updated their author instructions to require disclosure of AI involvement, but compliance remains low. As Phys.org reports, these policies have so far failed to stem the tide of undisclosed and low-quality AI-generated submissions.

- Leading journals are collaborating with technology providers to improve detection and screening tools.

- Some publishers are considering stricter penalties for undisclosed AI use or for submitting poor-quality work.

- Industry groups are calling for coordinated standards, citing the need for clear definitions and robust enforcement mechanisms.

Looking Forward: Balancing Innovation and Integrity

The rapid adoption of AI in academic writing presents both opportunities and risks. While AI has the potential to streamline research workflows and enhance accessibility, unchecked use can compromise the reliability of published science. Journals, publishers, and the broader academic community face the urgent task of adapting policies, investing in detection technologies, and fostering a culture of transparency.

As the debate continues, experts stress the importance of maintaining rigorous standards so that retractions and corrections remain rare and published research retains its value for future generations. Readers can explore official publisher guidance and recent industry analyses for deeper context on this evolving issue.

Ultimately, the academic publishing sector must strike a careful balance between leveraging new technologies and safeguarding the integrity of scholarly communication.